53% Recall: Why Microsoft's Own AIOps Confirms the Engineer Is Still Essential

Microsoft just published an engineering paper on ActionNex, a "Virtual Outage Manager" deployed in pilot on Azure that ingests multimodal operational signals, compresses them into critical events, and recommends mitigation actions. It's exactly the kind of system the industry has been selling for years as the end of human on-call: an agent that sees the incident, remembers past incidents, and tells you what to do.

The interesting thing about the paper isn't the architecture: the perception layer, hierarchical memory, and reasoning agent are essentially the current standard for serious agentic systems. What matters are the numbers reported across eight real Azure outages (8M tokens, 4,000 critical events):

- Precision: 71.4%

- Recall: 52.8–54.8%

And here's where, as a CTO and as someone who has carried on-call in production at enterprise scale, I stop and say: this is the clearest empirical proof you can get in 2026 that AI in operations is implementable, but not substitutive.

What These Numbers Mean in the Trenches

Let me translate the numbers into on-call language:

Precision of 71.4% means that out of every 10 actions ActionNex recommends, roughly 3 are wrong. Not marginally suboptimal: wrong. In a real outage, where every action mutates system state, executing all 10 recommendations without a human filter is like handing production permissions to a brand-new on-call engineer in their first month.

Recall of 53% is the more consequential number. It means that nearly half of the correct actions a human team executed during those outages, the system did not propose. Not errors — failures to see them. Half of the mitigation judgment needed to resolve an Azure incident falls outside the model's output.
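To make the two definitions concrete, here is a minimal sketch of the arithmetic. The counts are hypothetical, picked only so the ratios land near the reported figures; they are not taken from the paper.

```python
def precision(tp: int, fp: int) -> float:
    """Share of recommended actions that were correct."""
    return tp / (tp + fp)


def recall(tp: int, fn: int) -> float:
    """Share of correct actions that the system actually recommended."""
    return tp / (tp + fn)


# Hypothetical counts (assumption, not from the paper):
# tp = correct actions the system recommended
# fp = recommended actions that were wrong
# fn = correct actions the system never proposed
tp, fp, fn = 50, 20, 44

print(f"precision = {precision(tp, fp):.1%}")  # precision = 71.4%
print(f"recall    = {recall(tp, fn):.1%}")     # recall    = 53.2%
```

Note that the two ratios share a numerator but not a denominator: precision is judged against what the system said, recall against what the humans actually needed to do.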

If you're the CTO and the vendor's stance is "let AI carry the on-call rotation," the immediate read is: AI will carry the first half. The second half — the half that distinguishes a partial mitigation from a full service restoration — is still being carried by the engineer.

Why These Numbers Don't Scale With More Time

The optimistic counter is "yeah, but those are pilot numbers, they'll improve." I'm skeptical for three concrete reasons I see every week running DevOps at a company with non-trivial infrastructure.

First: the long tail of incidents doesn't repeat. ActionNex has an episodic memory of prior incidents. Anything that falls into known patterns has a high probability of being right. But in complex systems, an important fraction of the outages that matter are novel combinations: the incident your infrastructure has never seen before in this particular combination of regions, dependencies, and deploy state. Aggregate numbers can hide the fact that precision and recall on novel incidents are, in my experience, systematically worse than on recurring patterns. Senior SREs don't cost what they cost because they remember runbooks; they cost what they cost because they recognize patterns that weren't in the runbook.

Second: the model's incentives don't align with the asymmetric cost of a wrong action. In production, a wrong action during an outage isn't a wrong prediction — it's a mitigation that potentially worsens blast radius. The cost of a false positive (executing an unnecessary or harmful action) and the cost of a false negative (missing the right action) are not symmetric, and the training objectives of most reasoning models don't distinguish them well. You can push precision up by raising the threshold, but then recall drops further. There's no easy way out with more data alone.
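The threshold trade-off can be sketched in a few lines. Everything below is an illustrative assumption: the scored recommendations are synthetic, and the cost weights (`cost_fp`, `cost_fn`) are placeholders you would have to set from your own incident economics.

```python
from typing import List, Tuple

# Synthetic (confidence, was_correct) pairs; illustrative only.
SCORED: List[Tuple[float, bool]] = [
    (0.95, True), (0.90, True), (0.85, False), (0.80, True), (0.70, True),
    (0.60, False), (0.55, True), (0.40, False), (0.35, True), (0.20, False),
]


def evaluate(threshold: float, cost_fp: float = 5.0, cost_fn: float = 1.0):
    """Return (precision, recall, weighted_cost) when only recommendations
    with confidence >= threshold are surfaced."""
    accepted = [ok for conf, ok in SCORED if conf >= threshold]
    tp = sum(accepted)                      # surfaced and correct
    fp = len(accepted) - tp                 # surfaced but wrong
    fn = sum(ok for _, ok in SCORED) - tp   # correct but filtered out
    precision = tp / (tp + fp) if accepted else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall, fp * cost_fp + fn * cost_fn


for t in (0.50, 0.75, 0.88):
    p, r, cost = evaluate(t)
    print(f"threshold={t:.2f}  precision={p:.2f}  recall={r:.2f}  cost={cost:.0f}")
```

On this toy data, each step up in threshold buys precision and pays for it in recall. Which threshold is "best" depends entirely on the cost weights, and those weights are a business judgment, not something the model learns by itself.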

Third: runtime verification isn't free. One of the implicit findings of the paper is that "human-executed actions" are the feedback that improves the system. Plainly: the system improves because the engineer keeps judging every recommendation. If you take the engineer out of the loop, you also remove the learning signal. AI in operations needs the engineer not just as a safety net, but as a source of gradient.

The Contrast with the "Self-Managing" Vision Paper

I've been reading in parallel a late-2025 IEEE vision paper, AI-Driven DevOps for Intelligent Automation in Continuous Software Delivery Pipelines (Kiran Raj K M et al., ICECMSN 2025), which describes the future of the discipline like this:

"...emerging technologies such as generative AI enable fully automated pipeline capable of code generation, error detection, deployment, and performance monitoring with minimal human intervention. ... a future where software systems evolve into self managing, self improving ecosystems."

It's the language I hear every quarter at vendor steering committees. Minimal human intervention. Self-managing. Self-improving. And as a CTO, you have to know how to translate it.

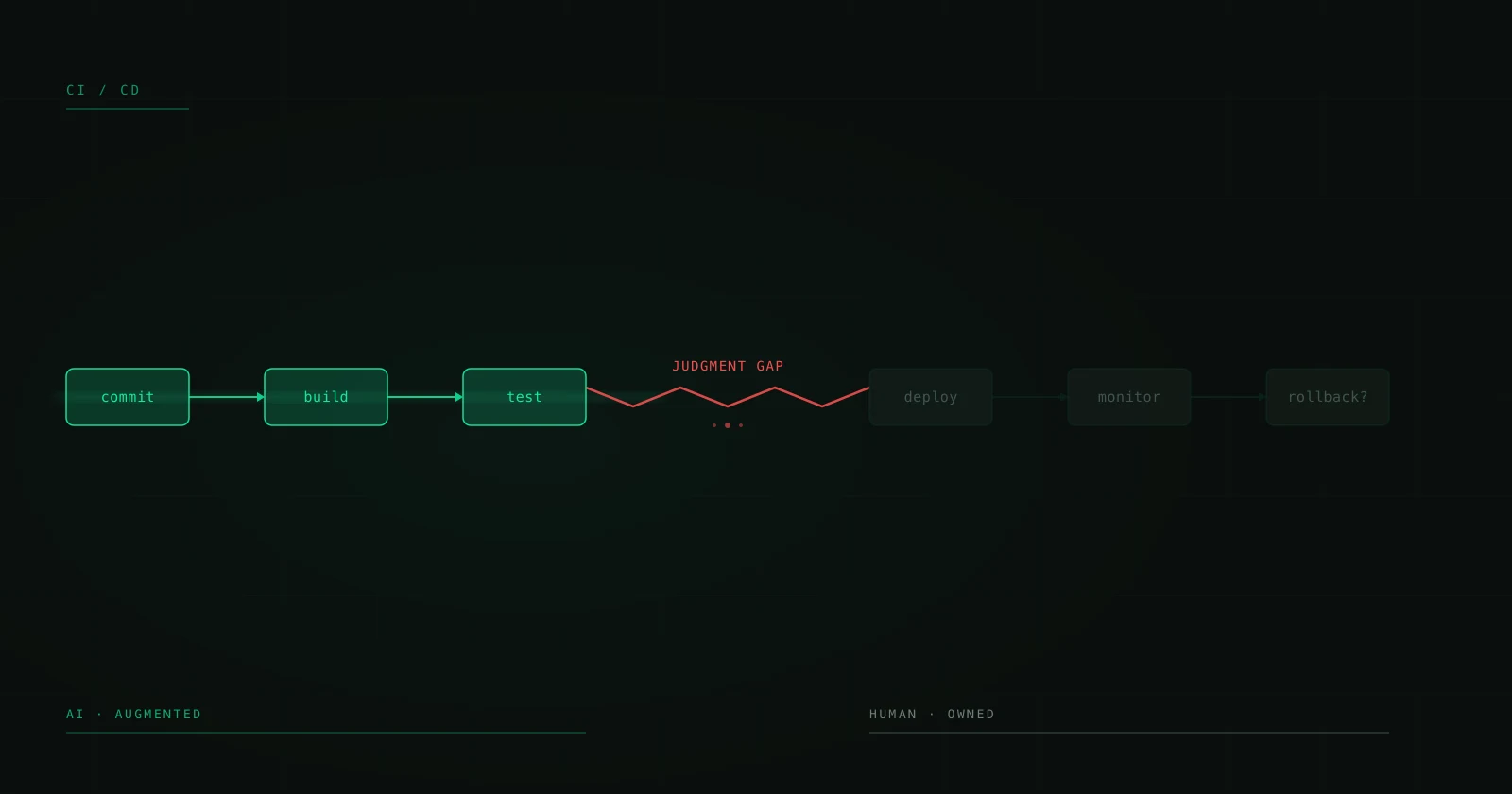

Put the ActionNex paper and the IEEE paper side by side and the asymmetry is unavoidable: one is the empirical reality of the best in-pilot system at one of the largest clouds in the world; the other is the aspirational vision. The distance between the two is exactly the space where your engineers live. And it's wide. A 53% recall is not a "self-managing" system; it's a system that leaves roughly 47% of the correct mitigation actions for a human to find.

What Changes (and What Doesn't) in a Mature DevOps Practice

Let me be clear: ActionNex and similar systems are useful. I'd implement them. But with the seams that operational maturity demands.

What changes:

- First triage can be meaningfully accelerated. Correct recommendations are correct; in an outage the clock is expensive, and having an agent that offers you the most likely hypotheses before you open the dashboard is real value.

- Historical pattern discovery stops depending on human memory. Episodic memory is exactly the thing humans do worst — recalling which similar incident happened sixteen months ago and which mitigation worked. Here AI clearly beats the human.

- Post-mortem documentation can be produced with much less friction. What used to be three days of writing can become a draft reviewed in an hour.

What doesn't change:

- Authority to take action remains human. No serious 2026 governance mechanism should authorize an agent to execute large-blast-radius actions without explicit human approval unless its calibrated confidence is at least 99%, and calibrated 99% confidence on novel incidents is a fantasy.

- Incident ownership remains human. Someone has to answer the postmortem. AI doesn't answer postmortems. That's the line that doesn't move with a model upgrade.

- Judgment about what's acceptable to degrade remains human. The question "what do we let drop so the rest survives" depends on business context, customer, contract. AI can propose candidates; the human decides.
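The authority rule above can be written down as policy-as-code. This is a minimal sketch under stated assumptions: the `Recommendation` fields, the use of affected-service count as a stand-in for blast radius, and both limits are hypothetical and not part of ActionNex or any vendor API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Recommendation:
    action: str
    confidence: float        # model-reported; assumed to be calibrated
    reversible: bool         # can the change be rolled back cleanly?
    affected_services: int   # crude stand-in for blast radius


# Hypothetical policy limits; tune to your own governance rules.
MAX_AUTO_SERVICES = 1
MIN_AUTO_CONFIDENCE = 0.99


def requires_human_approval(rec: Recommendation) -> bool:
    """Return True unless the action is reversible, narrow, and high-confidence."""
    if not rec.reversible:
        return True
    if rec.affected_services > MAX_AUTO_SERVICES:
        return True
    return rec.confidence < MIN_AUTO_CONFIDENCE


failover = Recommendation("fail over region", 0.97, reversible=False, affected_services=12)
restart = Recommendation("restart one stateless pod", 0.995, reversible=True, affected_services=1)

print(requires_human_approval(failover))  # True
print(requires_human_approval(restart))   # False
```

The design choice that matters is the default: the function returns "needs approval" for anything that fails a single check, so a missing or miscalibrated confidence score can only make the system more conservative.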

The Question to Ask When Someone Sells You "Autonomous" AIOps

When a vendor or consultant pitches an AIOps system with "self-healing" framing, the question isn't "what's your precision?" The question is:

"In your validation set, of the cases where the system decided not to act, in how many was the correct call to not act?"

That's the negative predictive value: how often "no action" was actually the right call. Most vendors won't give you the number, because it's not pretty. ActionNex, credit to Microsoft, publishes a closely related one, the miss rate: with recall at roughly 53%, about 47% of the cases where there was a correct action, the system didn't propose it. Not "didn't act": didn't see it. It's an honest number. Most commercial demos show you the subset where the system shines.

That's the diagnostic question: if the vendor can't answer it, you're not buying an operations system, you're buying a demo.
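The diagnostic question and the published miss rate are two different ratios over the same confusion matrix, and it helps to see them side by side. The counts below are hypothetical, chosen for illustration; the paper does not report a true-negative count.

```python
def negative_predictive_value(tn: int, fn: int) -> float:
    """Of the cases where the system stayed silent, how often silence was right."""
    return tn / (tn + fn)


def miss_rate(tp: int, fn: int) -> float:
    """Of the cases where an action was needed, how often the system stayed silent.
    Equal to 1 - recall."""
    return fn / (tp + fn)


# Hypothetical counts (assumption, not from the paper):
# tp = needed action proposed, fn = needed action not proposed,
# tn = correctly proposed nothing.
tp, fn, tn = 50, 44, 30

print(f"NPV       = {negative_predictive_value(tn, fn):.1%}")  # NPV       = 40.5%
print(f"miss rate = {miss_rate(tp, fn):.1%}")                  # miss rate = 46.8%
```

A vendor can only answer the first question if they tracked the true negatives, which means they logged the moments the system chose to stay quiet. That logging discipline is itself a useful signal of maturity.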

What I'd Recommend This Quarter

Three concrete actions for a CTO or head of DevOps under pressure to "automate on-call with AI":

- Implement AIOps as a copilot, not as an operator. The agent suggests; the engineer approves and executes. No action with blast radius beyond a reversible change is autonomously delegated. This line is written into policy, not into the runbook.

- Measure recall on novel incidents, not the aggregate set. If your vendor only reports aggregate numbers, demand the split between recurring patterns and novel cases. The gap is usually dramatic.

- Invest in the engineer as a source of gradient. Treat each human mitigation decision as training data. Your SRE team's value isn't only resolving incidents; it's generating the corpus that makes the copilot better. If you treat them as substitutable, you also lose the improvement loop.
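The second recommendation, splitting recall by novel versus recurring incidents, is straightforward to compute once each incident is labeled. A minimal sketch follows; the `IncidentEval` record and all the numbers are hypothetical, and the labeling of an incident as "novel" is assumed to come from your own post-incident review.

```python
from typing import Iterable, NamedTuple


class IncidentEval(NamedTuple):
    incident_id: str
    novel: bool            # labeled by humans during post-incident review
    correct_actions: int   # actions the team actually needed
    proposed_correct: int  # how many of those the copilot proposed


# Hypothetical evaluation records; illustrative only.
EVALS = [
    IncidentEval("inc-1", False, 30, 22),
    IncidentEval("inc-2", False, 30, 20),
    IncidentEval("inc-3", True, 25, 7),
    IncidentEval("inc-4", True, 15, 4),
]


def recall(rows: Iterable[IncidentEval]) -> float:
    """Pooled recall over a set of incidents."""
    rows = list(rows)
    needed = sum(r.correct_actions for r in rows)
    found = sum(r.proposed_correct for r in rows)
    return found / needed if needed else 0.0


print(f"aggregate = {recall(EVALS):.1%}")                               # aggregate = 53.0%
print(f"recurring = {recall(r for r in EVALS if not r.novel):.1%}")     # recurring = 70.0%
print(f"novel     = {recall(r for r in EVALS if r.novel):.1%}")         # novel     = 27.5%
```

In this made-up dataset a respectable-looking 53% aggregate hides a 70% versus 27.5% split. Whether your real gap is that large is an empirical question, which is exactly why the split is worth demanding.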

The line I defend is simple and now empirically supported: AI in DevOps is implementable and has clear ROI as augmentation. It's not substitutive. The ActionNex paper isn't proof of AI's failure; it's proof that one of the most capable engineering organizations in the world, with the best data and the best engineers, still builds a system that needs the engineer for the remaining 47%. If that's true for Microsoft on Azure, it's true for your infrastructure, almost certainly with more modest numbers.

The engineer isn't being substituted. They're being leveraged. The teams and CTOs that understand the difference are the ones who'll ship reliable systems this decade.

Sources:

- Lin, Z., Hu, H., Hao, M., et al. ActionNex: A Virtual Outage Manager for Cloud Computing. arXiv:2604.03512 (2026). arxiv.org/abs/2604.03512

- Kiran Raj K M, Karthik K Poojary, Abhay, Aishwarya R S, Lathesh Kumar S R. AI-Driven DevOps for Intelligent Automation in Continuous Software Delivery Pipelines. ICECMSN 2025. DOI:10.1109/ICECMSN68058.2025.11382867
